A couple of days back I heard about an interesting new startup called Taalas, and they are doing some interesting work in the LLM inference space. I thought we’ll try to deep dive on what I found about that today.

They take a trained model like Llama 3.1 8B and turn it into a fixed chip. Instead of a GPU pulling weights from High Bandwidth Memory(HBM) every token, the weights are baked into silicon in a big ROM like fabric. The model and the hardware are basically the same thing, so you are really buying a specific model in PCIe card form.

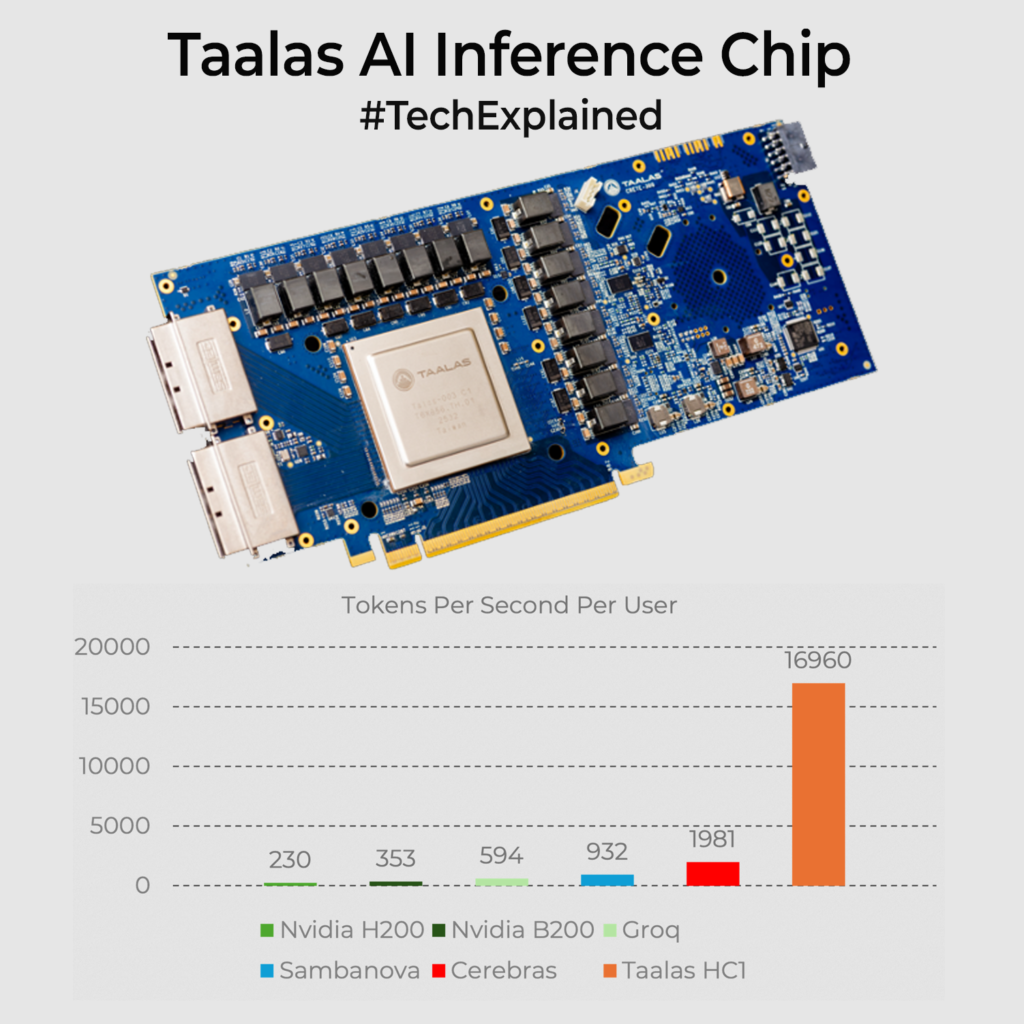

On their first part, HC1, they talk about roughly 17k tokens per second per user for that 8B model at around 200W power. The nearest competitor is the Cerebras chip which does around 2k tokens per sec. With every weight on the die and no HBM in the loop, most of the work becomes local switching instead of moving GBs back and forth. To visualize it, think of a grid where each weight is a tiny logic cell, not a number in memory. When an activation vector arrives, that grid lights up along fixed paths and each cell contributes its small multiply and add. The layer output appears with almost no indexing overhead. SRAM around it holds KV cache and adapters, so you can still add LoRA style tweaks. Try out at chatjimmy.ai It’s mind-blowingly fast, the moment you hit the Enter key.

Where could that be useful? Anywhere you are happy to standardise on a stable model and really care about latency and cost per query. Meaning robots, edge devices, superfast agentic frameworks etc.

Am I really convinced if this is the way ahead? I am not sure. Since it’s baked into the Silicon, you loose flexibility when you want to change things. You need big deployment volumes to make the economics work. By the time a tape out happens(they say 60days), the next generation model would be out there. Also not sure how it scales for large Trillion+ token models. A classic case is for stable popular older models like ChatGPT 4o if its weights are open sourced, its fanboys would love to bake it with Taalas and use for deployment since OpenAI sunsetted the model last week. There is potential lets see where this goes.

0 Comments

Comments are closed.